Most AI projects do not fail because the model is weak.

They fail because the model is disconnected.

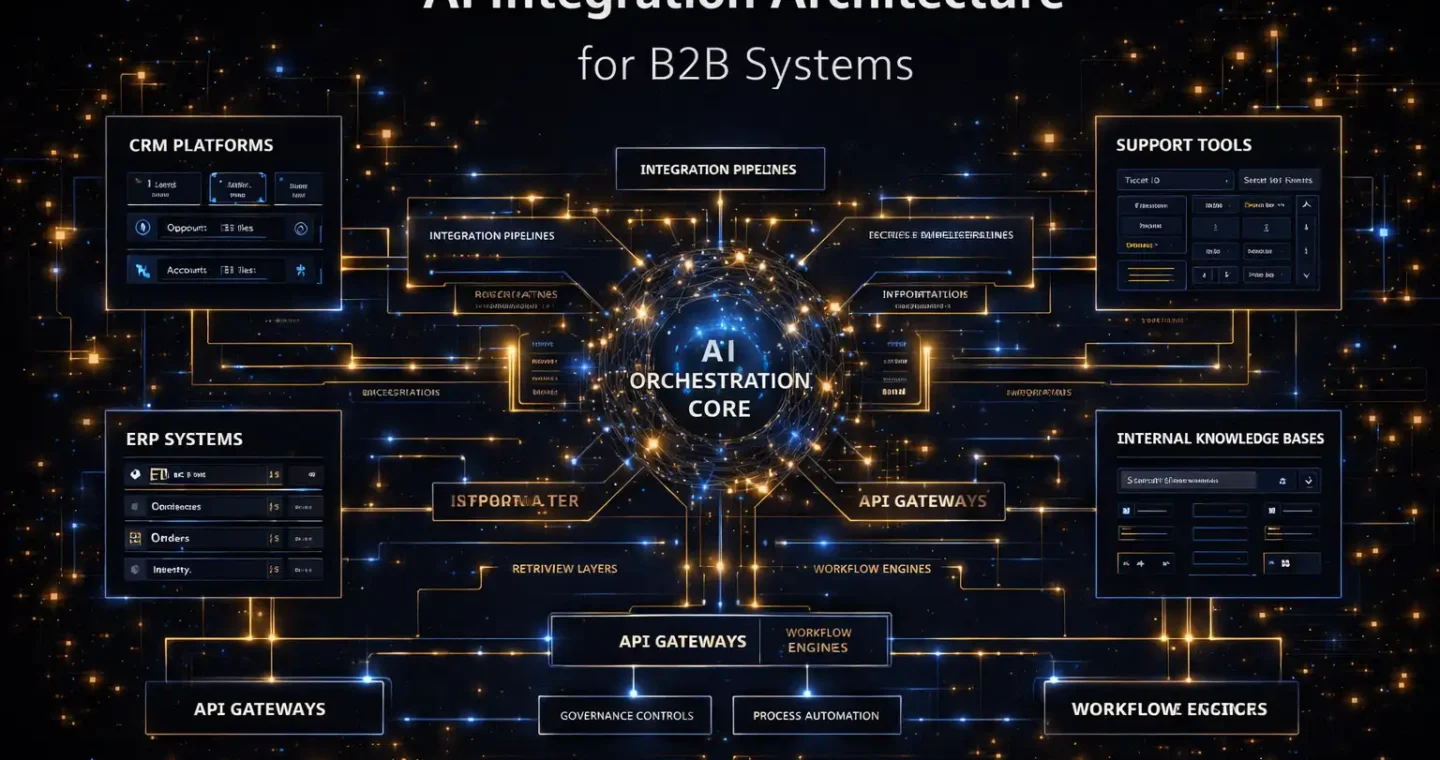

It can generate text, summarize documents, or answer questions in a demo. But once it enters a real B2B environment, the gap becomes obvious. The AI does not know where the source of truth lives. It does not understand system boundaries. It cannot reliably move across CRM data, internal documents, support systems, approval workflows, and operational databases without a clear architecture behind it.

That is why AI integration matters.

For a B2B company, the real challenge is rarely “How do we access a model?” The harder question is how to connect AI to the systems, workflows, and data environments that actually run the business. IBM’s definition of enterprise application integration is useful here because it focuses on connecting disparate applications and data across environments using APIs and middleware to reduce silos and improve process flow. AWS makes a similar point in its enterprise generative AI guidance, emphasizing layered architectures and production-ready design patterns rather than isolated experiments.

That is the real context for AI integration architecture.

It is the blueprint that determines whether AI becomes operationally useful or just technically impressive.

- What AI integration architecture actually means

- Why B2B systems make this harder

- The real purpose of AI integration architecture

- The core layers of AI integration architecture

- Common architecture patterns for AI integration in B2B

  - Embedded AI pattern

  - Orchestrated workflow pattern

  - Knowledge-grounded assistant pattern

  - Action-oriented agent pattern

- What a strong B2B AI integration architecture gets right

- Where AI integration projects usually go wrong

- How to design AI integration architecture the right way

- What this means for B2B growth

- FAQ

What AI integration architecture actually means

AI integration architecture is the system design that connects AI capabilities to business applications, data sources, workflows, controls, and user-facing experiences.

That includes:

- where the model sits

- how it receives context

- what systems it can access

- how it retrieves or exchanges data

- what workflow logic surrounds it

- what permissions apply

- how results are monitored and governed

A plain-English definition would be:

AI integration architecture is the structured way a business connects AI to its existing systems so the AI can work with real data, real processes, and real operating constraints.

That is a better definition than simply saying “integrating AI into the business,” because architecture implies design decisions:

- what connects to what

- what happens in what order

- what is allowed

- what is isolated

- what is logged

- what can scale

Those decisions are what separate a production system from a promising prototype.

Why B2B systems make this harder

B2B environments are rarely clean.

Most companies operate across a mix of:

- CRM platforms

- ERP systems

- support tools

- internal documents

- spreadsheets

- APIs

- legacy databases

- cloud apps

- approval workflows

- email-driven processes

IBM describes enterprise integration as using multiple approaches such as API management, application integration, and messaging to connect core business capabilities across diverse IT environments. That is especially relevant in B2B because the environment is usually hybrid by default, not neat by design.

Now add AI.

The moment you do that, the architecture has to answer harder questions:

- Which systems are authoritative?

- What data can the model access?

- Does the AI retrieve information or trigger actions?

- What happens when answers are uncertain?

- What human checkpoints exist?

- How do you prevent sensitive leakage?

- How do you connect modern AI layers to legacy systems without rebuilding everything?

This is why AI integration architecture matters so much more in B2B than in consumer demos.

The real purpose of AI integration architecture

A lot of teams think AI integration is about connecting a model API to an app.

That is only the outer layer.

The real purpose of the architecture is to create a system where AI can operate with:

- the right context

- the right boundaries

- the right reliability

- the right business value

In other words, the architecture is what turns an AI capability into an AI function.

Without integration architecture, AI stays generic.

With integration architecture, AI becomes part of the operating system of the business.

The core layers of AI integration architecture

The cleanest way to understand this is as a stack.

1. Experience layer

This is where users or systems interact with AI.

It may include:

- chat interfaces

- internal copilots

- CRM side panels

- support consoles

- workflow portals

- API endpoints

- automated triggers

Google Cloud’s architecture for orchestrating access to disparate enterprise systems describes conversational and multichannel interfaces routing through an orchestrator into backend systems. That is a useful model because it shows that the interface is only the entry point, not the architecture itself.

The experience layer should answer one question:

Where does the request begin?

2. Orchestration layer

This is one of the most important layers.

The orchestration layer decides:

- what happens after a request arrives

- which tools or systems are involved

- what sequence of steps is required

- whether work is linear, parallel, or delegated

- where control rules apply

Microsoft’s AI architecture guidance on orchestration patterns is especially useful here. It recommends starting with the lowest level of complexity that reliably solves the problem and outlines patterns like sequential orchestration, concurrent orchestration, group-chat style collaboration, and handoff-based delegation.

That principle matters for B2B systems.

Not every integration needs a swarm of agents. Often a simpler orchestrated flow is more reliable, less expensive, and easier to govern.
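
To make the linear-versus-parallel distinction concrete, here is a minimal sketch of the two simplest patterns. The step functions (`clean`, `shorten`, `word_count`, `has_question`) are hypothetical stand-ins for model or tool calls, not part of any vendor's guidance.

```python
from concurrent.futures import ThreadPoolExecutor


def run_sequential(steps, value):
    """Sequential pattern: each step consumes the previous step's output."""
    for step in steps:
        value = step(value)
    return value


def run_concurrent(tasks, value):
    """Concurrent pattern: independent tasks see the same input.

    Results are returned in a dict keyed by task name.
    """
    with ThreadPoolExecutor() as pool:
        futures = {name: pool.submit(task, value) for name, task in tasks.items()}
        return {name: future.result() for name, future in futures.items()}


# Hypothetical stand-ins for model or tool calls.
def clean(text):
    return text.strip()


def shorten(text):
    return text[:20]


def word_count(text):
    return len(text.split())


def has_question(text):
    return "?" in text


message = "  Can you resend the March invoice?  "
summary = run_sequential([clean, shorten], message)
signals = run_concurrent(
    {"words": word_count, "question": has_question}, message.strip()
)
```

If a request can be handled by `run_sequential` alone, that is usually the version to ship first. The concurrent form earns its place only when the analyses are truly independent.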

3. Intelligence layer

This is where the model or models live.

Depending on the architecture, this layer may handle:

- summarization

- extraction

- classification

- reasoning

- drafting

- question answering

- routing assistance

The mistake many teams make is assuming this layer is the architecture.

It is not.

It is one layer inside the architecture.

The model is important, but the model alone is not the system.

4. Context and retrieval layer

For most B2B systems, this is where AI starts becoming useful.

The context layer supplies business-specific information from:

- knowledge bases

- product documentation

- policies

- contracts

- support libraries

- internal repositories

- structured records

AWS frames grounding and retrieval-augmented generation (RAG) as essential for enterprise trust, accuracy, and explainability because foundation models alone often lack awareness of proprietary data and business rules. Its guidance breaks RAG into two steps: retrieve relevant content from curated enterprise knowledge, then generate a response grounded in it.

This layer answers:

What information should the AI use before it responds?
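
As a rough illustration of retrieve plus generate, the sketch below ranks a few documents by word overlap and builds a grounded prompt from the best matches. The scoring is a toy stand-in for a real search or vector index, and the document texts, `retrieve`, and `build_grounded_prompt` are all made up for the example.

```python
def retrieve(query: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Rank documents by how many query words they share (toy scoring)."""
    query_words = set(query.lower().split())
    scored = []
    for doc_id, text in documents.items():
        overlap = len(query_words & set(text.lower().split()))
        if overlap > 0:
            scored.append((overlap, doc_id))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def build_grounded_prompt(query: str, documents: dict[str, str], doc_ids: list[str]) -> str:
    """Put the retrieved passages in front of the question before generation."""
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return f"Answer using only the context below.\n{context}\nQuestion: {query}"


# Illustrative content only; a real system would read from a curated store.
docs = {
    "refund-policy": "refunds are issued within 30 days of purchase",
    "sla": "support responds within 4 business hours",
    "onboarding": "new accounts receive a kickoff call",
}

question = "when are refunds issued"
hits = retrieve(question, docs)
prompt = build_grounded_prompt(question, docs, hits)
```

The prompt would then go to whichever model sits in the intelligence layer. Keeping the document IDs in the context is one simple way to make answers traceable back to a source.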

5. Integration layer

This is the actual connection fabric between AI and business systems.

It can include:

- APIs

- middleware

- message queues

- event streams

- iPaaS platforms

- file integrations

- connectors

- service buses

IBM defines API integration as using APIs to expose integration flows and connect enterprise applications, systems, and workflows for the exchange of data and services. Its iPaaS guidance also highlights the need to connect apps and data across public cloud, private environments, and on-premises systems.

This is the layer that determines whether AI can move from output to action.
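
One way to picture this layer is a small, shared connector interface that every AI use case writes against. The sketch below assumes a hypothetical `Connector` protocol and an in-memory stand-in for a CRM; a real adapter would wrap an actual API client behind the same two methods.

```python
from typing import Protocol


class Connector(Protocol):
    """Hypothetical interface every system adapter is assumed to implement."""

    def fetch(self, record_id: str) -> dict: ...

    def write(self, record_id: str, fields: dict) -> None: ...


class InMemoryCrm:
    """Stand-in for a real CRM client, used here only for illustration."""

    def __init__(self) -> None:
        self._records: dict[str, dict] = {}

    def fetch(self, record_id: str) -> dict:
        return dict(self._records.get(record_id, {}))

    def write(self, record_id: str, fields: dict) -> None:
        self._records.setdefault(record_id, {}).update(fields)


def save_summary(connector: Connector, record_id: str, summary: str) -> dict:
    """Write an AI-generated summary back through the connector."""
    connector.write(record_id, {"ai_summary": summary})
    return connector.fetch(record_id)


crm = InMemoryCrm()
record = save_summary(crm, "acct-1", "Renewal due next quarter")
```

Because `save_summary` depends only on the interface, the same logic could target a different system by swapping the adapter rather than rewriting the use case.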

6. Governance and security layer

This layer is what keeps the architecture safe enough for real operations.

It includes:

- access controls

- audit logs

- permissions

- data restrictions

- approval gates

- response validation

- incident handling

- monitoring

Even when enterprise guidance focuses more on architecture than policy, the underlying pattern is the same: AI must operate inside system boundaries, not outside them. AWS’s enterprise-ready layered approach explicitly includes control-oriented layers around infrastructure, models, tooling, and operations.

This layer answers:

What is allowed, by whom, and under what conditions?
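
That question can be sketched as a small gate that checks a role against a policy and records every decision. The roles, action names, and the `Policy` and `Gate` classes below are assumptions made up for the example, not a reference design.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    # Maps a role to the set of actions it may request.
    allowed: dict[str, set[str]]
    # Actions that need explicit human approval even when the role is allowed.
    needs_approval: set[str] = field(default_factory=set)


@dataclass
class Gate:
    policy: Policy
    audit_log: list[dict] = field(default_factory=list)

    def check(self, role: str, action: str, approved: bool = False) -> bool:
        """Return True if the action may proceed; log the decision either way."""
        permitted = action in self.policy.allowed.get(role, set())
        if permitted and action in self.policy.needs_approval:
            permitted = approved
        self.audit_log.append(
            {"role": role, "action": action, "approved": approved, "permitted": permitted}
        )
        return permitted


policy = Policy(
    allowed={"support_agent": {"read_ticket", "issue_refund"}},
    needs_approval={"issue_refund"},
)
gate = Gate(policy)

can_read = gate.check("support_agent", "read_ticket")
refund_without_approval = gate.check("support_agent", "issue_refund")
refund_with_approval = gate.check("support_agent", "issue_refund", approved=True)
unknown_role = gate.check("intern", "read_ticket")
```

Denied requests are logged as well as permitted ones, since the audit trail is often most useful for the cases that were blocked.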

Common architecture patterns for AI integration in B2B

Not every system should be designed the same way.

A strong AI integration architecture usually follows one of a few recurring patterns.

Embedded AI pattern

In this model, AI is embedded inside an existing system.

Examples:

- AI inside a CRM workspace

- AI inside a support console

- AI inside document processing software

This works well when the workflow already has a natural home and the AI is there to assist, summarize, classify, or recommend.

The advantage is adoption.

Users stay inside the tools they already use.

Orchestrated workflow pattern

In this pattern, AI sits inside a multi-step workflow.

For example:

- an intake form triggers enrichment

- the AI classifies the request

- the workflow routes it

- an approval step follows

- the system writes back to a CRM or task tool

This is often one of the best B2B patterns because it combines AI with workflow logic instead of expecting the model to do everything.
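
The steps above can be sketched as a short pipeline in which the AI handles only the classification step. Everything here is hypothetical: the `Request` fields, the keyword rule standing in for a model call, the queue names, and the list standing in for a CRM.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Request:
    sender: str
    text: str
    company: str = "unknown"
    category: str = ""
    queue: str = ""


def enrich(req: Request, directory: dict[str, str]) -> None:
    """Intake enrichment: look up the company from the sender's email domain."""
    domain = req.sender.split("@")[-1]
    req.company = directory.get(domain, "unknown")


def classify(req: Request) -> None:
    """AI step. A keyword check stands in for a model call."""
    req.category = "contract" if "renewal" in req.text.lower() else "general"


def route(req: Request) -> None:
    """Plain workflow logic: map the category to a queue."""
    req.queue = {"contract": "account-management", "general": "support"}[req.category]


def process(
    req: Request,
    directory: dict[str, str],
    approver: Callable[[Request], bool],
    crm: list[dict],
) -> bool:
    """Run the flow; write back only if the approval step passes."""
    enrich(req, directory)
    classify(req)
    route(req)
    if not approver(req):
        return False  # stop before anything is written back
    crm.append({"company": req.company, "queue": req.queue})
    return True


crm_records: list[dict] = []
directory = {"example.com": "Example Co"}

approved = process(
    Request("ana@example.com", "Question about our renewal terms"),
    directory,
    approver=lambda r: r.category == "contract",
    crm=crm_records,
)
blocked = process(
    Request("bo@other.test", "hello"),
    directory,
    approver=lambda r: False,
    crm=crm_records,
)
```

The model could be swapped or retrained without touching `route` or the approval logic, which is much of the appeal of this pattern.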

Knowledge-grounded assistant pattern

This pattern is built around retrieval and grounded answering.

It is common for:

- internal knowledge assistants

- support copilots

- proposal support tools

- operations Q&A systems

AWS and Google Cloud both position retrieval and grounding as central to enterprise AI architectures where models need current, domain-specific information.

Action-oriented agent pattern

This is more advanced.

The AI does more than retrieve or generate content. It also invokes tools, updates systems, or coordinates across applications.

Google Cloud’s agentic architecture example shows an orchestrator that unifies access to multiple enterprise systems through a conversational interface, reducing point-to-point system sprawl and operator context switching. Microsoft’s orchestration guidance similarly shows where more complex agent patterns become useful, but only when the complexity is justified.

This pattern can be powerful, but it requires much stronger controls.
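
One simple control is an explicit tool registry, so an agent can only call what has been registered for it. The sketch below is a minimal illustration with hypothetical tools (`get_ticket_status`, `close_ticket`) and a hypothetical `ToolRegistry` class, not any framework's API.

```python
from typing import Callable


class ToolRegistry:
    """Maps tool names to callables; only registered tools can be invoked."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., object]] = {}

    def register(self, name: str, fn: Callable[..., object]) -> None:
        self._tools[name] = fn

    def invoke(self, name: str, **kwargs) -> object:
        if name not in self._tools:
            raise PermissionError(f"tool not registered: {name}")
        return self._tools[name](**kwargs)


# Hypothetical backend state and tools, for illustration only.
tickets = {"T-1": "open"}


def get_ticket_status(ticket_id: str) -> str:
    return tickets[ticket_id]


def close_ticket(ticket_id: str) -> str:
    tickets[ticket_id] = "closed"
    return "closed"


registry = ToolRegistry()
registry.register("get_ticket_status", get_ticket_status)
# close_ticket exists but is deliberately not registered for this agent.

status = registry.invoke("get_ticket_status", ticket_id="T-1")
```

If the agent asks for `close_ticket`, `invoke` raises `PermissionError` and the ticket stays open. Write access becomes a deliberate registration decision rather than a side effect of what happens to be importable.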

What a strong B2B AI integration architecture gets right

The strongest architectures are not defined by the fanciest diagram.

They are defined by good decisions.

Clear source-of-truth design

The architecture knows where reliable data lives.

It does not let the AI invent authority where none exists.

Separation between reasoning and execution

Many systems work better when the AI interprets, drafts, or recommends first, and a separate workflow or rule layer decides what actions are allowed.
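
A minimal sketch of that separation: the AI step produces a recommendation, and a plain rule layer decides what happens to it. The action names, the confidence score, and the 0.8 threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    action: str
    confidence: float  # assumed to be supplied by the AI step, 0.0 to 1.0


# Hypothetical policy values; real ones would come from the business.
AUTO_ALLOWED = {"tag_ticket", "draft_reply"}   # low-risk, may run unattended
REVIEW_ONLY = {"issue_refund"}                 # always needs a person
CONFIDENCE_THRESHOLD = 0.8


def decide(rec: Recommendation) -> str:
    """Rule layer: return 'execute', 'review', or 'reject'."""
    if rec.action in REVIEW_ONLY:
        return "review"
    if rec.action not in AUTO_ALLOWED:
        return "reject"  # unknown actions never run
    if rec.confidence >= CONFIDENCE_THRESHOLD:
        return "execute"
    return "review"


confident_tag = decide(Recommendation("tag_ticket", 0.93))
uncertain_tag = decide(Recommendation("tag_ticket", 0.50))
refund = decide(Recommendation("issue_refund", 0.99))
unknown = decide(Recommendation("delete_account", 0.99))
```

High confidence never overrides the rule layer here. A refund goes to review and an unknown action is rejected no matter how certain the model claims to be.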

Controlled access to systems

The model should not have unlimited access to every tool just because integration is technically possible.

Reusable integration logic

If every AI use case creates new one-off connectors, the architecture becomes fragile fast. IBM’s enterprise integration guidance is especially relevant here because it emphasizes reusable integration approaches across multiple systems and services.

Observability

You need to know:

- what context was used

- what systems were accessed

- what output was produced

- what action followed

- what failed

Without that, the architecture may work in demos but break under accountability.
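
The five items above map naturally onto one trace record per request. This is a sketch with hypothetical field names; in practice the JSON line would be sent to whatever logging or tracing system the team already runs.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TraceRecord:
    request_id: str
    context_ids: list[str] = field(default_factory=list)       # what context was used
    systems_accessed: list[str] = field(default_factory=list)  # what systems were accessed
    output: str = ""                                            # what output was produced
    action: str = ""                                            # what action followed
    error: str = ""                                             # what failed, if anything

    def to_json(self) -> str:
        """Serialize to one JSON line with stable key order."""
        return json.dumps(asdict(self), sort_keys=True)


trace = TraceRecord(request_id="req-001")
trace.context_ids.append("refund-policy")
trace.systems_accessed.append("crm")
trace.output = "Draft reply prepared"
trace.action = "none"
line = trace.to_json()
```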

Where AI integration projects usually go wrong

This is the part that matters most in practice.

They start with the model, not the workflow

A team chooses a model first, then looks for somewhere to put it.

That usually leads to weak fit.

They ignore the data layer

If the AI cannot access clean, relevant, current business context, the architecture will produce weak outputs no matter how strong the model is.

They overcomplicate orchestration too early

Microsoft’s guidance is very clear: use the lowest complexity level that reliably meets the requirement. Too many teams jump into multi-agent or highly dynamic architectures before proving that a simpler sequential flow works.

They treat integration like a side task

In reality, integration is the hard part.

“There is no AI without integration” is how IBM framed the launch of its hybrid integration approach for the AI era, and that line is unusually accurate for enterprise systems.

They forget legacy realities

B2B companies often need AI to work across old and new systems at the same time. If the architecture assumes a perfect greenfield environment, it usually fails the moment it meets the real stack.

How to design AI integration architecture the right way

A stronger process usually looks like this.

Start with one operational use case

Not “we need AI integration.”

Instead:

- internal knowledge assistant

- support triage flow

- proposal enrichment workflow

- CRM summary pipeline

- document intelligence workflow

Map the systems involved

List:

- system of record

- input systems

- output systems

- APIs

- documents

- event triggers

- human checkpoints
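
It can help to write that list down as data rather than as a slide. The sketch below assumes a hypothetical `SystemMap` structure with a small `gaps` check. The rule that any write-back needs a human checkpoint is an illustrative assumption, not a universal requirement.

```python
from dataclasses import dataclass, field


@dataclass
class SystemMap:
    use_case: str
    system_of_record: str
    input_systems: list[str] = field(default_factory=list)
    output_systems: list[str] = field(default_factory=list)
    event_triggers: list[str] = field(default_factory=list)
    human_checkpoints: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """Return plain-language warnings about what the map is missing."""
        problems = []
        if not self.system_of_record:
            problems.append("no system of record named")
        if not self.input_systems:
            problems.append("no input systems listed")
        if self.output_systems and not self.human_checkpoints:
            problems.append("writes to output systems without a human checkpoint")
        return problems


# Illustrative values for a support triage use case.
triage = SystemMap(
    use_case="support triage flow",
    system_of_record="ticketing system",
    input_systems=["shared inbox", "web form"],
    output_systems=["ticketing system"],
    event_triggers=["new ticket created"],
)

before = triage.gaps()
triage.human_checkpoints.append("team lead approval")
after = triage.gaps()
```

Running `gaps` before any build work starts is a cheap way to notice that a use case has no named source of truth or no review step.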

Decide what the AI layer should and should not do

Should it:

- summarize

- classify

- retrieve

- recommend

- route

- trigger actions

- draft outputs

Draw the boundary early.

Choose the lightest orchestration pattern that works

This is where Microsoft’s orchestration guidance is especially useful. Sequential flows, concurrent analysis, handoffs, and multi-agent collaboration all have real use cases, but complexity introduces coordination overhead, latency, and cost.

Build governance into the architecture, not outside it

Permissions, approvals, logging, and escalation paths should not be an afterthought.

What this means for B2B growth

A strong AI integration architecture does more than connect systems.

It changes how scalable the business becomes.

Because once AI is connected properly to context, workflows, and system actions, it can support:

- faster support operations

- better knowledge access

- cleaner CRM execution

- smarter internal workflows

- better document-heavy processes

- more consistent cross-system coordination

That is the real value.

Not “AI for the sake of AI.”

But AI as a connected layer inside the business system.

That is why architecture matters so much here. It decides whether AI becomes another disconnected tool or a usable part of the company’s operating model.

And for B2B companies, that decision is often the difference between experimentation and advantage.

If your team is designing AI that needs to work across real systems, real data, and real operational constraints, our AI integration services are built to help turn that into an architecture that actually holds up.

FAQ

What is AI integration architecture?

AI integration architecture is the system design that connects AI models to business applications, data sources, workflows, APIs, and controls so AI can operate in a real enterprise environment. It is broader than model access because it includes orchestration, context, system boundaries, and governance.

Why is AI integration important for B2B systems?

B2B systems usually span multiple tools, data sources, and operational environments. AI only becomes useful when it can work with those systems in a controlled and reliable way rather than remaining isolated in a standalone interface.

What are the main layers of AI integration architecture?

A practical architecture often includes an experience layer, orchestration layer, intelligence layer, context or retrieval layer, integration layer, and governance layer. Enterprise guidance from Microsoft, AWS, Google Cloud, and IBM all points toward layered or multi-component system design rather than a single-model approach.

What is the difference between AI integration and workflow automation?

Workflow automation focuses on moving work through predefined process logic. AI integration is broader because it connects AI capabilities to systems, data, workflows, and controls so the AI can interpret, retrieve, generate, or act within business processes.

What is a good first AI integration use case?

A strong first use case is usually narrow, high-value, and connected to an existing workflow, such as internal knowledge assistance, support triage, proposal enrichment, or document intelligence. These use cases make it easier to define the required systems, data, and controls before expanding.