Retrieval-augmented generation, usually shortened to RAG, is an AI pattern that improves model answers by retrieving relevant external information at runtime and injecting it into the prompt before the model responds. Google Cloud describes RAG as a framework that combines retrieval systems with large language models, while AWS defines it as a way to optimize LLM output by referencing authoritative knowledge outside the model’s training data.

That sounds technical, but the business meaning is simpler.

RAG helps an AI system answer with your company’s knowledge, not just what the model learned during training. In enterprise settings, that usually means grounding answers in internal documents, product data, policies, support content, research libraries, or operational records. IBM describes RAG as an architecture that connects generative AI to external knowledge bases so responses can be more relevant, current, and domain-specific without retraining the model.

This matters because most businesses do not need a model that sounds smart in general. They need a system that can answer accurately about their information.

That is where RAG becomes useful.

- What RAG actually means

- Why enterprises use RAG

- How RAG works

- RAG vs fine-tuning

- RAG vs search

- The main components of an enterprise RAG system

- Why RAG is valuable for enterprises

- Common enterprise use cases for RAG

- What makes enterprise RAG hard

- How enterprises should implement RAG

- RAG is not a silver bullet

- What RAG really means for enterprise AI

- FAQ

What RAG actually means

A plain-English definition looks like this:

RAG is a system that retrieves relevant information from approved data sources and uses that information to help a language model generate a better answer.

Instead of relying only on the model’s built-in knowledge, the system first searches a connected knowledge source. Then it feeds the most relevant context into the model. After that, the model generates a response based on both the user’s request and the retrieved material. Microsoft, Google Cloud, IBM, and OpenAI all describe RAG in essentially this same pattern: retrieval first, grounded generation second.

So when someone asks, “What is RAG?” the most useful business answer is:

RAG is the layer that helps AI answer with grounded business context.

Why enterprises use RAG

Enterprise teams usually hit the same problem with large language models.

The model may write well, summarize well, and sound confident. However, it does not automatically know:

- your internal policies

- your latest product details

- your contracts

- your private knowledge base

- your current support documentation

- your company-specific terminology

That gap is exactly why RAG has become a standard enterprise pattern. Microsoft describes it as an industry-standard approach for building applications that need to process proprietary or domain-specific information the model does not already know. IBM and AWS make the same point from a different angle: RAG gives LLMs access to current, authoritative, domain-specific knowledge without the cost of retraining.

In practice, enterprises use RAG because it can improve:

- answer relevance

- answer freshness

- domain accuracy

- source traceability

- trust in AI responses

How RAG works

At a high level, most RAG systems follow three core steps.

Retrieval

The system receives a user query and searches an external knowledge source for relevant information. That source may be a vector database, full-text search index, document repository, SQL database, or a hybrid search stack. Azure Databricks and Microsoft both describe retrieval as the first step in a standard RAG flow.

Augmentation

The system combines the retrieved material with the original user query. This creates a richer prompt with supporting context. OpenAI describes RAG as injecting external context into the prompt at runtime, while Google Cloud describes this as grounded generation based on retrieved information.

Generation

The model generates a response using the question plus the added context. If the retrieval step is strong, the answer is usually more specific, more useful, and better aligned to enterprise information. IBM, AWS, and Microsoft all describe this grounding step as central to RAG’s value.

That is the core loop.
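The three steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `DOCUMENTS` dictionary, the word-overlap scoring, and the `llm` callable are all hypothetical stand-ins for a real search index and a real model client.

```python
from collections import Counter

# Hypothetical in-memory knowledge source; a real system would query a search index.
DOCUMENTS = {
    "refund-policy": "Refunds are available within 30 days of purchase with a receipt.",
    "shipping-faq": "Standard shipping takes 5 business days within the country.",
    "warranty": "Hardware products carry a 12 month limited warranty.",
}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and strip basic punctuation from each word."""
    return [word.strip(".,?!").lower() for word in text.split()]


def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    """Retrieval: rank documents by word overlap with the query (toy scoring)."""
    query_terms = Counter(tokenize(query))
    scored = []
    for doc_id, text in DOCUMENTS.items():
        overlap = sum((Counter(tokenize(text)) & query_terms).values())
        if overlap > 0:
            scored.append((overlap, doc_id, text))
    # Highest overlap first; ties are broken alphabetically by document id.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def augment(query: str, passages: list[tuple[str, str]]) -> str:
    """Augmentation: combine the retrieved passages with the user query."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


def answer(query: str, llm, k: int = 2) -> str:
    """Generation: send the grounded prompt to the model.

    `llm` is an assumed callable that takes a prompt string and returns text.
    """
    return llm(augment(query, retrieve(query, k)))
```

Calling `answer("How many days do I have for refunds?", llm=my_model)` would retrieve the refund policy first, place it in the prompt, and pass that prompt to whatever model client `my_model` wraps.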

Simple in concept. Much harder in implementation.

RAG vs fine-tuning

This is one of the most important distinctions for business buyers.

Fine-tuning changes the model.

RAG changes the context the model receives.

AWS and IBM both position RAG as a cost-efficient way to adapt model outputs to domain-specific use cases without retraining the model on internal data.

That is why many enterprise teams start with RAG before considering fine-tuning.

RAG is often the better option when:

- information changes frequently

- private documents are involved

- the goal is grounded Q&A or knowledge assistance

- the company wants source-aware answers

- retraining would be too costly or too slow

Fine-tuning may still have a role. However, it solves a different problem. It is better for changing task behavior or response style. RAG is better for supplying relevant information at inference time.

RAG vs search

RAG is not just “search with a chatbot.”

Search returns documents or links.

RAG retrieves relevant content and then uses that content to help the model generate a synthesized answer.

That difference is important.

A search system helps users find the source.

A RAG system helps users get an answer grounded in the source.

The strongest enterprise solutions often combine both:

- strong search and retrieval

- clear source visibility

- answer generation with grounding

- citations or links back to original materials

IBM explicitly notes that RAG systems can include citations to knowledge sources in responses, which improves verification and trust.
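One way to support citations is to number the retrieved passages in the prompt and then map the markers in the model's answer back to their sources. The sketch below assumes the model is instructed to cite with bracketed numbers such as `[1]`; the function names and the prompt wording are illustrative, not taken from any vendor's API.

```python
import re


def build_cited_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Number each (source, text) passage so the model can cite it as [n]."""
    numbered = "\n".join(
        f"[{i}] {text}" for i, (_, text) in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered sources. "
        "Cite sources inline as [n].\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}"
    )


def resolve_citations(answer: str, passages: list[tuple[str, str]]) -> list[str]:
    """Map [n] markers in the answer back to source names, in order of first use.

    Markers that do not match a supplied passage are ignored.
    """
    cited: list[str] = []
    for marker in re.findall(r"\[(\d+)\]", answer):
        index = int(marker)
        if 1 <= index <= len(passages):
            source = passages[index - 1][0]
            if source not in cited:
                cited.append(source)
    return cited
```

Ignoring out-of-range markers is a deliberate choice here: a citation the system cannot trace to a retrieved passage should not be shown to the user as a source.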

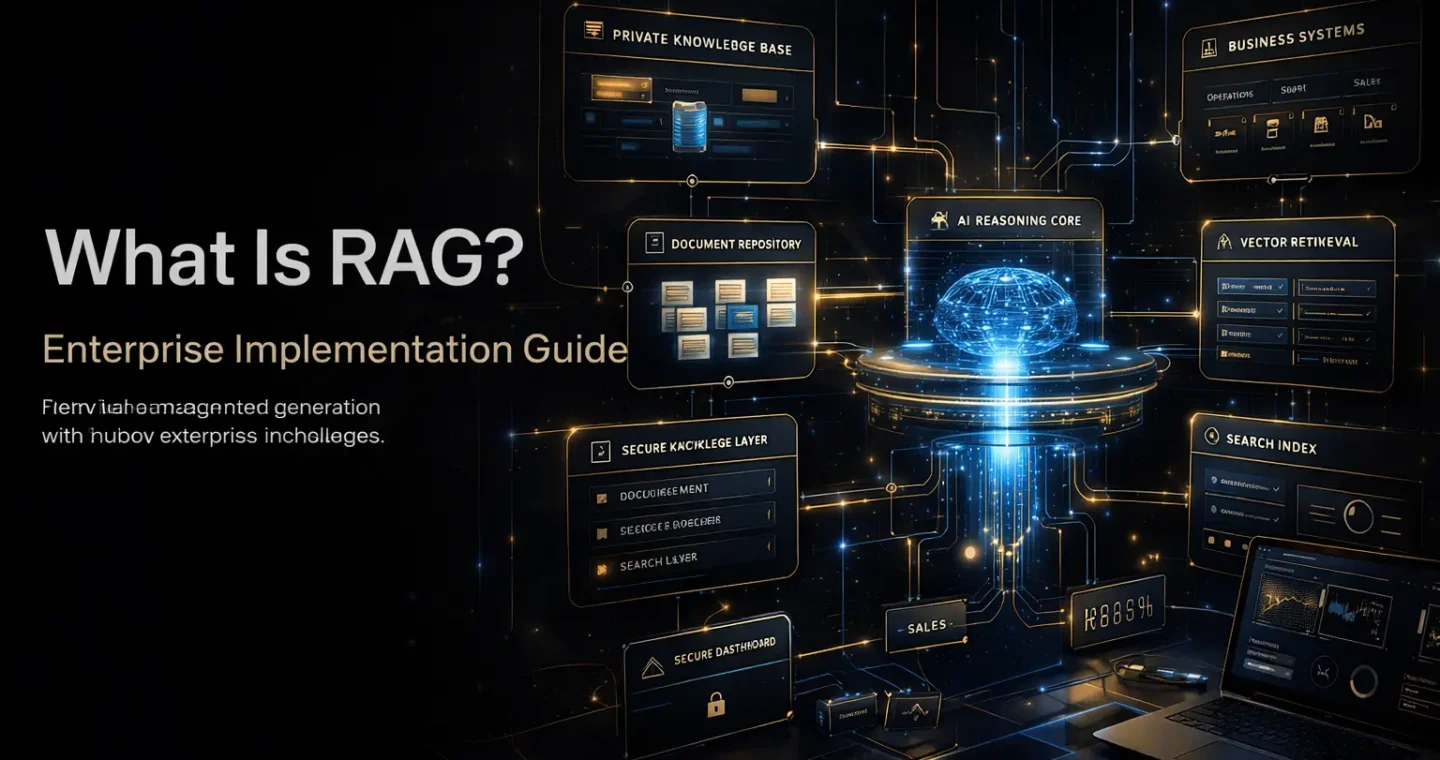

The main components of an enterprise RAG system

A real enterprise RAG system is more than a model plus some files.

Knowledge sources

These are the approved sources the system can use.

Examples include:

- product documentation

- support articles

- policy libraries

- contracts

- internal wikis

- CRM notes

- research archives

- standard operating procedures

The quality of these sources matters. If the underlying content is outdated, duplicated, or badly structured, RAG quality will suffer.

Ingestion pipeline

Before retrieval works well, documents usually need to be collected, cleaned, chunked, enriched, indexed, and refreshed. Microsoft’s RAG design guidance emphasizes preparation steps such as defining the domain, gathering documents, analyzing content, and selecting evaluation queries before implementation.
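As an illustration of the chunking step, here is a minimal sketch that splits text into fixed-size word windows with overlap. The word-based windowing and the default sizes are assumptions chosen for clarity; real pipelines often split on tokens, sentences, or document structure instead.

```python
def chunk_words(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into chunks of up to `chunk_size` words.

    Consecutive chunks share `overlap` words so that a sentence cut at a
    boundary still appears whole in at least one chunk.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be at least 0 and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the current chunk already reaches the end of the text
    return chunks
```

Larger chunks keep more context together but make retrieval less precise. Smaller chunks are more precise but can lose meaning, which is why chunk size is usually tuned against evaluation queries rather than fixed up front.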

Retrieval layer

This is the mechanism that finds relevant material. It may use:

- vector search

- keyword search

- hybrid search

- metadata filters

- reranking

Azure guidance specifically points to decisions like chunking strategy, embedding choice, search configuration, and whether to use vector, full-text, hybrid, or multiple retrieval methods.
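To make hybrid search more concrete, the sketch below merges a keyword ranking and a vector ranking with reciprocal rank fusion (RRF). RRF is one commonly used fusion technique and is not named in the guidance cited above; the constant `k = 60` is a conventional default, and the document ids are made up for illustration.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document ids into one ranking.

    Each document scores 1 / (k + rank) for every list it appears in, so
    documents ranked well by several retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken alphabetically by id.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from two retrievers for the same query.
keyword_hits = ["a", "b", "c"]
vector_hits = ["b", "d", "a"]
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

Because RRF uses only rank positions, it avoids having to normalize keyword scores and vector similarities onto a common scale.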

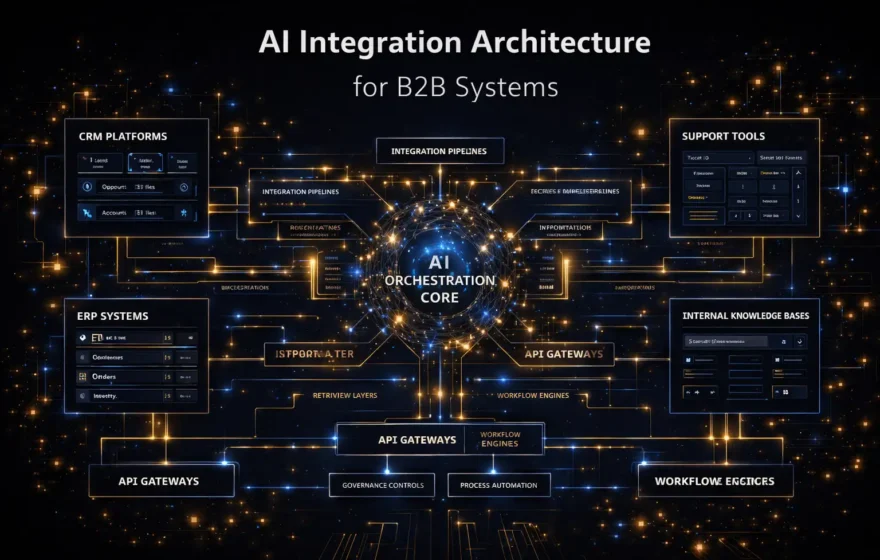

Orchestration layer

This layer handles the application flow:

- receives the user query

- runs retrieval

- assembles the prompt

- applies policies

- sends the request to the model

- formats the output

Microsoft’s secure multitenant RAG guidance describes an orchestration layer that fetches authorized grounding data and passes it to the model as context.

Generation layer

This is the model that writes the response using the grounded prompt.

Security and access control

Enterprise RAG is not just about relevance. It is also about who is allowed to see what. Microsoft’s multitenant guidance makes this explicit: only authorized users should be able to ground responses on the information they are permitted to access.

That means permissions are not optional. They are part of the architecture.
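A minimal sketch of that idea is to filter candidate content by the user's permissions before anything reaches the prompt. The `Chunk` type, the `allowed_groups` field, and the group names are hypothetical; a real deployment would take them from the identity provider and would usually apply the filter inside the search index query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # groups permitted to read this chunk


def authorized_chunks(chunks: list[Chunk], user_groups: set[str]) -> list[Chunk]:
    """Keep only chunks that share at least one group with the user."""
    return [chunk for chunk in chunks if chunk.allowed_groups & user_groups]


# Hypothetical index contents.
index = [
    Chunk("handbook", "Office hours are 9 to 5.", frozenset({"all-staff"})),
    Chunk("salary-bands", "Band 3 ranges are confidential.", frozenset({"hr"})),
]

# An engineer sees the handbook but never the HR-only chunk.
visible = authorized_chunks(index, {"engineering", "all-staff"})
```

Filtering before prompt assembly matters because content that never enters the prompt cannot leak into the generated answer.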

Why RAG is valuable for enterprises

RAG becomes valuable when a business needs AI outputs to be tied to real internal knowledge.

The biggest enterprise benefits are usually these.

More relevant answers

Because the model receives context tied to the question, it is more likely to return a useful answer for the actual domain. Google Cloud, Microsoft, and IBM all frame RAG around improved relevance and grounded output.

More current answers

A model’s training data has a cutoff. RAG can retrieve more current information from connected sources. IBM and AWS both highlight this as a major advantage.

Better use of private data

RAG lets enterprises use internal knowledge without retraining the model on that data. That often makes implementation faster and more controllable.

Lower hallucination risk

RAG does not eliminate hallucinations. Still, grounding answers in retrieved information can reduce them when the retrieval quality is good. IBM, AWS, and Azure all position grounded responses as more accurate and reliable than purely generative answers.

Better trust and verification

When users can see the source or citation behind an answer, they can verify it themselves, which makes the system easier to trust and adopt. IBM explicitly calls out citations as a trust advantage of RAG systems.

Common enterprise use cases for RAG

RAG is most useful when the business problem is knowledge-heavy.

Internal knowledge assistants

Employees ask questions about company policies, internal procedures, product details, or operational guidance. The RAG system retrieves the right material and generates a grounded answer.

Support and service enablement

Support teams use RAG to pull answers from updated documentation, policies, and troubleshooting content so responses are faster and more consistent.

Sales enablement

RAG can help surface approved product information, pricing rules, case-study details, and competitive context for proposals or account preparation.

Document-heavy operations

Legal, procurement, compliance, and finance teams often work with large amounts of structured and unstructured text. RAG can help them retrieve the right context, then summarize and interpret it more efficiently.

Research and analysis workflows

RAG is strong when users need answers based on a known corpus of documents rather than only general model knowledge. Google Cloud, IBM, and OpenAI all point to enterprise search, internal knowledge, and file-based retrieval as strong RAG applications.

What makes enterprise RAG hard

RAG sounds simple in a diagram. In production, it is much more demanding.

Bad source content

If the knowledge base is outdated, duplicated, low-quality, or poorly organized, the system will retrieve weak context.

Weak chunking

If documents are split badly, the retriever may miss the right context or return fragments that lack meaning. Microsoft’s RAG guidance specifically calls chunking strategy a major design consideration.

Poor retrieval

If the system cannot retrieve the right material, the model will still answer, but the answer may be wrong, vague, or misleading.

Missing permissions

This is a serious enterprise risk. A RAG system that retrieves unauthorized content is not ready for production. Microsoft’s secure multitenant RAG guidance focuses heavily on enforcing authorized access to grounding data.

Weak evaluation

A RAG system can look impressive in demos and still fail in real usage. Microsoft’s architecture guidance recommends a rigorous, scientific approach to design, experimentation, and evaluation rather than assuming the basic pattern is enough.

How enterprises should implement RAG

The best RAG implementations are not the ones with the flashiest demos. They are the ones that are scoped, tested, and governed correctly.

Start with a defined business use case

Do not begin with “we want RAG.”

Start with:

- internal policy Q&A

- support knowledge assistant

- proposal knowledge retrieval

- contract intelligence support

- product documentation assistant

That gives the project a measurable target.

Define the source of truth

Know exactly which data the system is allowed to use. If the content is not trusted, the answers will not be trusted either.

Design retrieval before prompt polish

Prompt engineering matters, but retrieval quality matters more. A beautifully written prompt cannot rescue weak retrieval.

Build evaluation early

Microsoft’s RAG solution design guidance emphasizes experimentation and evaluation throughout the process. That is the right approach. Measure:

- retrieval relevance

- answer faithfulness

- citation quality

- user trust

- business usefulness
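Of the measures above, retrieval relevance is the easiest to automate. The sketch below computes recall@k over a small labeled query set. The metric choice, the function names, and the idea of a hand-labeled set of relevant document ids per query are assumptions for illustration; faithfulness, trust, and business usefulness usually need human or model-based review.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        raise ValueError("each query needs at least one relevant document")
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def mean_recall_at_k(
    results: dict[str, list[str]], labels: dict[str, set[str]], k: int
) -> float:
    """Average recall@k across all labeled queries.

    `results` maps each query to the ranked ids the retriever returned;
    `labels` maps each query to the ids a reviewer marked as relevant.
    """
    return sum(recall_at_k(results[q], labels[q], k) for q in labels) / len(labels)


# Hypothetical evaluation set with two queries.
results = {"q1": ["a", "b", "c"], "q2": ["x", "y", "z"]}
labels = {"q1": {"a", "c"}, "q2": {"z", "w"}}
score_at_2 = mean_recall_at_k(results, labels, k=2)
score_at_3 = mean_recall_at_k(results, labels, k=3)
```

Tracking a number like this across changes to chunking or search configuration shows whether a change actually improved retrieval, instead of relying on a handful of demo questions.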

Add access control from day one

Security should not be a later phase. Enterprise RAG needs role-aware data access, tenant isolation where relevant, and clear governance over which sources can be used.

Keep human review for high-risk workflows

If the output affects compliance, contracts, finance, or customer-facing commitments, human oversight should stay in the loop.

RAG is not a silver bullet

This is important.

RAG improves grounded answering. It does not automatically solve:

- poor data governance

- missing documentation

- broken internal search

- unclear ownership of knowledge

- weak access controls

- unrealistic expectations about accuracy

OpenAI describes RAG as injecting external context at runtime to improve relevance and accuracy, which is true. But that does not mean every enterprise AI problem should become a RAG project.

Sometimes the right answer is:

- better search

- better content operations

- cleaner data architecture

- narrower workflow automation

- stronger integrations

RAG is powerful when the use case really needs grounded language generation.

What RAG really means for enterprise AI

The best way to think about RAG is this:

It is the bridge between a general-purpose model and a company’s real knowledge.

Without that bridge, AI may sound capable but remain too generic.

With that bridge, AI becomes much more useful for actual business work.

That is why RAG matters in enterprise implementation. It is not just a technical pattern. It is one of the most practical ways to turn AI from a general assistant into a business-aware system.

For companies that want AI to work with real internal knowledge, not just internet-scale general knowledge, RAG is often the first architecture that makes the project commercially meaningful.

And when it is designed properly, it becomes more than a chatbot feature. It becomes a knowledge layer that can serve support teams, operations, sales, research, and decision-making across the business.

If your team is exploring grounded AI systems that connect models to real business data, our AI integration services are built for that kind of implementation.

FAQ

What is RAG in simple terms?

RAG, or retrieval-augmented generation, is an AI approach that retrieves relevant external information and adds it to the prompt before a language model generates an answer. That makes responses more grounded, relevant, and context-aware.

Why do enterprises use RAG?

Enterprises use RAG to connect AI systems to private, current, and domain-specific knowledge without retraining the model. This helps improve relevance, freshness, and trust in generated answers.

Is RAG the same as fine-tuning?

No. Fine-tuning changes the model itself, while RAG improves answers by supplying external context at runtime. They solve different problems. RAG is often preferred when information changes frequently or lives in private enterprise systems.

What are the main components of a RAG system?

A RAG system usually includes a knowledge source, ingestion process, retrieval layer, orchestration layer, generation model, and access controls. Enterprise implementations also need evaluation and governance.

Does RAG eliminate hallucinations?

No. RAG can reduce hallucinations by grounding answers in retrieved information, but it does not remove the risk completely. Retrieval quality, data quality, and system design still matter.